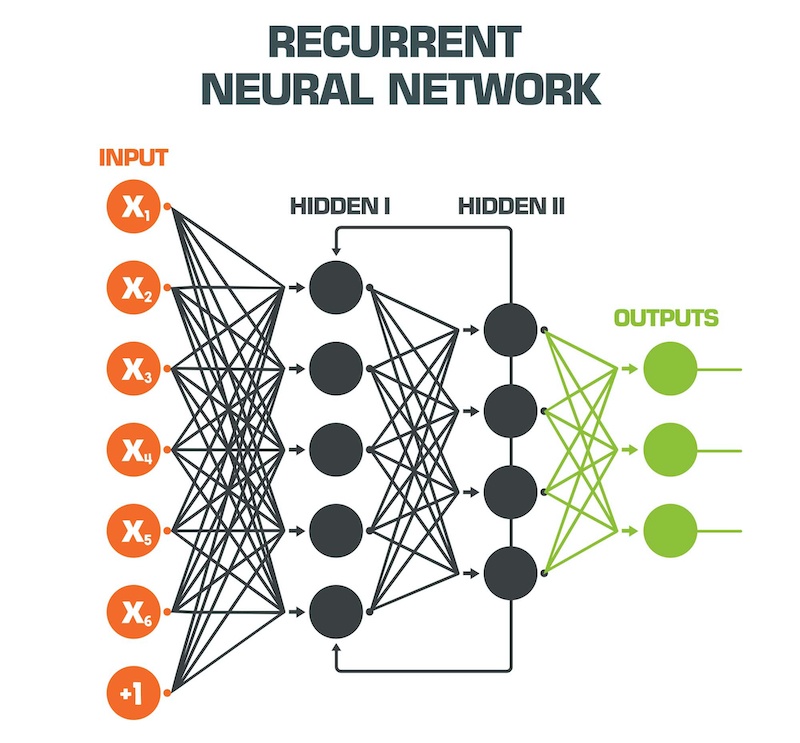

Recurrent neural networks (RNNs) are a machine learning/artificial intelligence (AI) technique developed for modeling temporal sequences, with demonstrated successes including but not limited to modeling human languages [1, 2, 3, 4, 5, 6, 7]. A specific and extremely popular instance of RNNs is the long short-term memory (LSTM) [8] neural network, which possesses more flexibility and can be used for challenging tasks such as language modeling, machine translation, and weather forecasting [6, 9, 10]. LSTMs were developed to alleviate a limitation of earlier RNN architectures, which could not learn information originating from the distant past. This is known as the vanishing gradient problem, a term that captures how the gradient, or force, experienced by the RNN parameters vanishes as a function of how long ago the change happened in the underlying data [11, 12].
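The vanishing gradient problem can be illustrated numerically. The following is a minimal sketch, not taken from the work described here: it assumes a plain tanh RNN with a random recurrent weight matrix whose largest singular value is rescaled to a hypothetical `weight_scale` below 1, and it tracks the norm of the Jacobian of the final hidden state with respect to hidden states further and further back in time. The function name `gradient_norms` and all parameters are illustrative assumptions.

```python
import numpy as np

def gradient_norms(hidden_size=16, steps=50, weight_scale=0.5, seed=0):
    """Spectral norm of d h_T / d h_{T-k} for a tanh RNN, for k = 1..steps."""
    rng = np.random.default_rng(seed)
    # Hypothetical recurrent weights, rescaled so the largest singular
    # value equals weight_scale (an assumption made for this illustration).
    W = rng.standard_normal((hidden_size, hidden_size))
    W *= weight_scale / np.linalg.norm(W, 2)

    h = np.zeros(hidden_size)
    jacobians = []
    for _ in range(steps):
        x = rng.standard_normal(hidden_size)  # random input drive
        h = np.tanh(W @ h + x)
        # One-step Jacobian: d h_t / d h_{t-1} = diag(1 - h_t^2) W
        jacobians.append((1.0 - h**2)[:, None] * W)

    # Chain the one-step Jacobians backwards from the final step.
    grad = np.eye(hidden_size)
    norms = []
    for J in reversed(jacobians):
        grad = grad @ J
        norms.append(np.linalg.norm(grad, 2))
    return np.array(norms)

norms = gradient_norms()
print(norms[0], norms[-1])  # the norm shrinks as we look further back in time
```

Because each one-step Jacobian here has spectral norm at most `weight_scale`, the product shrinks at least geometrically with the number of steps, which is the decay the term "vanishing gradient" refers to. The gating in an LSTM is designed to mitigate this decay.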

Recurrent neural networks have led to breakthroughs in natural language processing and speech recognition. Here we show that recurrent networks, specifically long short-term memory networks, can also capture the temporal evolution of chemical/biophysical trajectories. Our character-level language model learns a probabilistic model of 1-dimensional stochastic trajectories generated from higher-dimensional dynamics. The model captures Boltzmann statistics and also reproduces kinetics across a spectrum of timescales. We demonstrate how training the long short-term memory network is equivalent to learning a path entropy, and that its embedding layer, instead of representing the contextual meaning of characters, here exhibits a nontrivial connectivity between different metastable states in the underlying physical system. We demonstrate our model’s reliability through different benchmark systems and a force spectroscopy trajectory for a multi-state riboswitch. We anticipate that our work represents a stepping stone in the understanding and use of recurrent neural networks for studying the dynamics of complex stochastic molecular systems.
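To make the idea of a character-level model of a 1-dimensional trajectory concrete, here is a minimal sketch of one way such a trajectory could be turned into "characters" and next-character training pairs. This is an assumption for illustration, not the preprocessing described in the work: the uniform binning, the number of bins, the context length, and the function names `trajectory_to_tokens` and `next_token_pairs` are all hypothetical, and a random walk stands in for a real trajectory.

```python
import numpy as np

def trajectory_to_tokens(traj, n_bins=20):
    """Map a 1-D real-valued trajectory to integer 'characters' by uniform binning."""
    traj = np.asarray(traj, dtype=float)
    edges = np.linspace(traj.min(), traj.max(), n_bins + 1)
    # Use interior edges only, so labels fall in 0 .. n_bins - 1.
    return np.digitize(traj, edges[1:-1])

def next_token_pairs(tokens, context=10):
    """Sliding windows of `context` tokens, each paired with the token that follows."""
    X = np.stack([tokens[i:i + context] for i in range(len(tokens) - context)])
    y = tokens[context:]
    return X, y

# Stand-in data: a random walk, not a molecular trajectory.
rng = np.random.default_rng(0)
traj = np.cumsum(rng.standard_normal(1000))
tokens = trajectory_to_tokens(traj, n_bins=20)
X, y = next_token_pairs(tokens, context=10)
print(X.shape, y.shape)
```

Pairs like `(X, y)` are the usual input to a next-token model: an embedding layer maps each integer label to a vector, and a recurrent layer such as an LSTM predicts the distribution over the next label.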
